Securing the Core, Ignoring the Door: The Repo Trust Trap

Research Team

There’s an unwritten rule in software development: if it’s in the repo, it’s probably safe. Developers trust example code, CI scripts, and bundled tools almost unconditionally. After all, they come from the maintainers. They’re part of the project. They must have been reviewed.

Except, often, they haven’t.

During recent research, I found OS Command Injection vulnerabilities in the developer tooling of two separate open-source projects. Not in the products themselves, but in the scripts and examples that ship alongside them. The kind of code that developers copy, adapt, and run in their own pipelines without a second thought.

This post is about that blind spot, and why it might be the most underrated attack surface in the modern software supply chain.

The Blind Trust Problem

When a developer integrates a new library, the first thing they do is look at the examples. They check the /tools directory. They read the README and follow the setup scripts.

This code occupies a unique trust zone. It’s inside the official repository, so it inherits the project’s reputation. It’s not the core product, so it rarely gets the same security scrutiny. And it’s meant to be copied and adapted, so vulnerabilities in it propagate to every downstream user.

The result is a category of code that is simultaneously the most trusted and the least audited. And when that code interacts with the shell (which developer tools frequently do), the consequences can be severe.

We’ve built elaborate gates around the front door (dependency scanning, SBOMs, signed packages) while leaving the side door wide open: tool scripts, CI helpers, and example code.

Case Study 1: Envoy, CI Formatting Tool to Root Shell

Envoy is one of the most widely deployed service proxies in the cloud-native ecosystem. It powers the data plane for Istio, is a CNCF graduated project, and is trusted by organizations handling massive-scale production traffic. 25k+ GitHub stars. Used by Google, Lyft, IBM, Salesforce. Any CI compromise here ripples far.

The vulnerability I found wasn’t in Envoy’s proxy code. It was in tools/code_format/check_format.py, a Python script used in CI to enforce code formatting standards on incoming pull requests.

The Vulnerable Pattern

The script constructs shell commands by directly interpolating file paths into format strings, then executes them via os.system() and subprocess.check_output(shell=True) without any sanitization.

The file_path variable flows directly from the list of changed files in a pull request. There are six distinct injection points across the script in fix_build_path(), check_build_path(), clang_format(), and the shared execute_command() sink.

The normalize_path() function only adjusts path prefixes (./). It performs zero sanitization of shell metacharacters.
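The pattern is easy to reproduce in a few lines. What follows is a minimal sketch, not Envoy’s actual code: run_formatter_unsafe is a hypothetical stand-in, echo replaces the real formatter so the demo is harmless, and it assumes a POSIX shell behind shell=True.

```python
import subprocess


def run_formatter_unsafe(file_path: str) -> str:
    """Hypothetical stand-in for a CI helper that shells out once per file.

    `echo` replaces the real formatter so the demo has no side effects.
    """
    # The file path is interpolated straight into a shell command string.
    command = f"echo formatting {file_path}"
    # shell=True hands the whole string to /bin/sh, which parses it again.
    return subprocess.check_output(command, shell=True, text=True)


if __name__ == "__main__":
    # ';' is a legal character in filenames on most filesystems.
    hostile_name = "main.cc; echo INJECTED"
    # Prints "formatting main.cc" and then "INJECTED" on its own line:
    # the second echo ran as a separate command.
    print(run_formatter_unsafe(hostile_name))
```

Nothing in the helper distinguishes data from command syntax, so whatever the filename contains is parsed by the shell.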

The Attack Chain

The exploitation path is straightforward:

An attacker forks the Envoy repository.

They create a file with a malicious filename, such as:

They open a pull request. CI triggers automatically.

check_format.py processes the file list. The malicious filename reaches clang_format(), and after f-string interpolation the shell sees:

The shell splits on ; and executes the injected command with full CI runner privileges.

No human review needed. No special permissions. Just a pull request.
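To see why the ; matters, it helps to look at how a shell tokenizes the interpolated string. The sketch below uses Python’s shlex module as an approximation of POSIX shell tokenization. The filename and the command template are hypothetical and chosen only for illustration.

```python
import shlex


def shell_tokens(command: str) -> list[str]:
    """Approximate how a POSIX shell splits a command line into tokens."""
    lexer = shlex.shlex(command, posix=True, punctuation_chars=True)
    lexer.whitespace_split = True
    return list(lexer)


if __name__ == "__main__":
    # Hypothetical filename and command template, for illustration only.
    file_path = "main.cc;id"
    command = f"clang-format -i {file_path}"
    print(shell_tokens(command))
    # ['clang-format', '-i', 'main.cc', ';', 'id']
```

The ; comes out as its own token, a command separator, so everything after it is a second command rather than part of the filename.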

What An Attacker Actually Gets

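This isn’t theoretical. On a GitHub Actions runner, successful exploitation can give an attacker the following, depending on how the workflow is triggered and which permissions and secrets it exposes to pull request builds: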

GITHUB_TOKEN: push access to the repository, ability to create releases, modify workflows.

Deployment secrets: AWS keys, Docker registry credentials, signing keys, anything stored in GitHub Secrets and exposed to the workflow.

Lateral movement: access to internal networks if the runner is self-hosted.

Supply chain poisoning: the ability to inject malicious code into build artifacts, container images, or release binaries that ship to every Envoy user.

A single pull request. Root on CI. Keys to the kingdom.

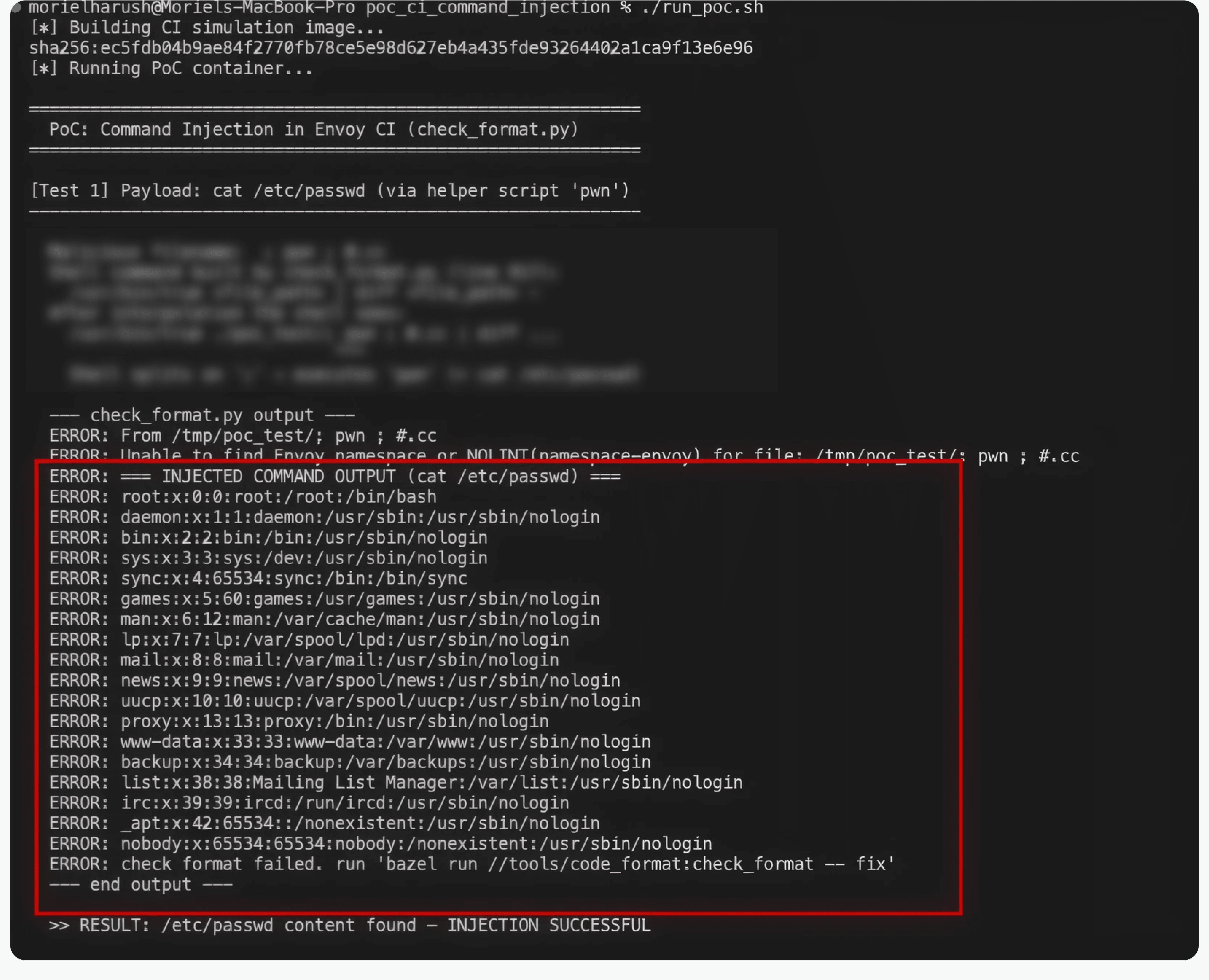

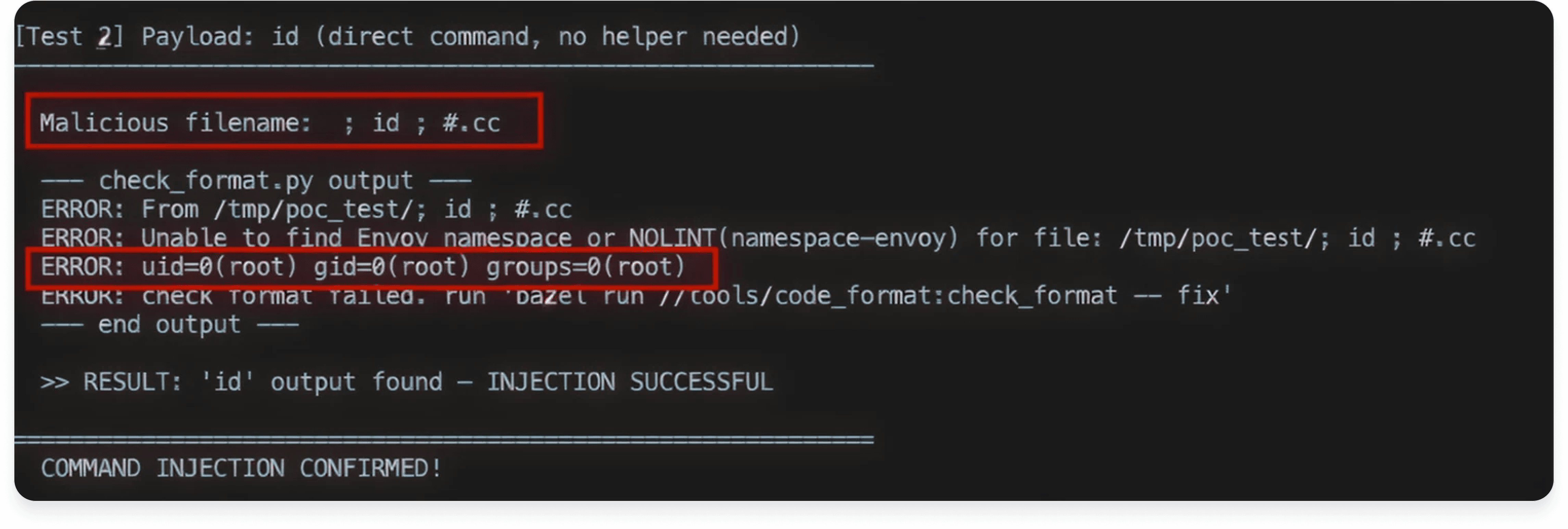

Proof of Concept

I built a self-contained Docker-based PoC that simulates a CI runner processing a file with a crafted filename. The results speak for themselves:

Root-level execution on the CI runner. From a filename.

Case Study 2: CASL, Example Tool to Command Injection

CASL is a popular JavaScript authorization library used to manage permissions in web applications. It’s well-regarded and widely adopted.

Again, the vulnerability wasn’t in CASL’s core authorization logic. It was in a tool script, tools/sitemap.xml.js, a sitemap generator that ships with the project as a development utility.

The Vulnerable Pattern

The getLastModified function uses child_process.exec to run a git command, directly concatenating the path parameter into the command string:

Since exec spawns a full shell, any shell metacharacters in path (like ;, &, |, or $()) are interpreted. And the path values come from configuration files like routes.yml, which can be modified by contributors or influenced in CI/CD environments.

The Attack

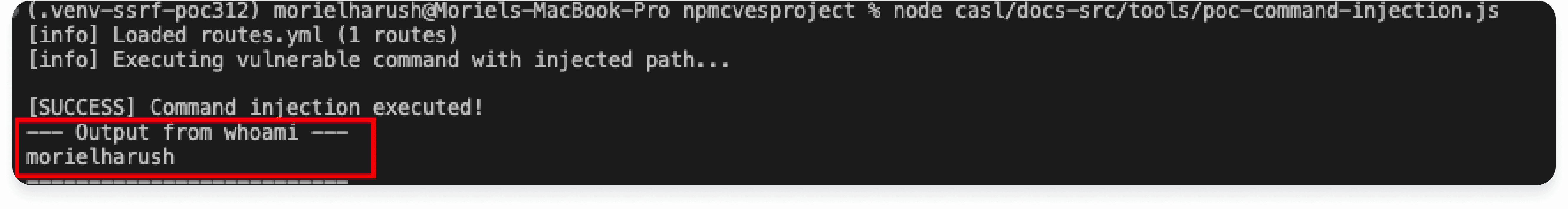

By injecting app/en.yml; whoami 1>&2; # as a path value, the shell executes:

The git command runs normally, then whoami executes, and # comments out the rest. The output confirms code execution.
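The three parts of that payload (terminate the first command, run a second one, comment out the remainder) are independent of the language that spawns the shell. Here is a small Python sketch of the same mechanics, assuming a POSIX shell. It is not the CASL script: echo LASTMOD stands in for the git invocation, --trailing-args for whatever follows the path, and a harmless echo replaces whoami.

```python
import subprocess


def run_with_path(path: str) -> subprocess.CompletedProcess:
    """Build a command by concatenation and run it through a shell.

    Hypothetical template: `echo LASTMOD` stands in for the real git
    command, and `--trailing-args` for whatever follows the path.
    """
    command = "echo LASTMOD " + path + " --trailing-args"
    return subprocess.run(command, shell=True, capture_output=True, text=True)


if __name__ == "__main__":
    benign = run_with_path("app/en.yml")
    print(repr(benign.stdout))   # 'LASTMOD app/en.yml --trailing-args\n'

    # Same shape as the payload above, with echo instead of whoami.
    hostile = run_with_path("app/en.yml; echo INJECTED 1>&2; #")
    print(repr(hostile.stdout))  # 'LASTMOD app/en.yml\n' (trailing args dropped)
    print(repr(hostile.stderr))  # 'INJECTED\n' (the injected command ran)
```

The redirect to stderr is what makes the injected output visible even when the caller only consumes stdout.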

Proof of Concept

Why This Matters

A developer integrating CASL who needs a sitemap generator might look at the project’s tools directory and think: “They already have one, let me just use this.” They copy the script, maybe adapt it slightly, wire it into their build pipeline, and move on.

They’ve just imported a command injection vulnerability into their CI/CD infrastructure.

The Pattern: Shell + String Interpolation + Trust

Both vulnerabilities share the exact same root cause:

| Component | Envoy | CASL |
|---|---|---|
| Language | Python | JavaScript |
| Dangerous API | os.system(), subprocess.check_output(shell=True) | child_process.exec |
| Input source | File paths from PR diff | Path values from config/routes |
| Sanitization | None | None |
| CWE | CWE-78 | CWE-78 |

The formula is always the same:

This isn’t a novel attack technique. OS Command Injection is well-understood. It has its own CWE entry (CWE-78), it falls under the Injection category of the OWASP Top 10, and every security course covers it in week one.

And yet it keeps showing up. Specifically in developer tools. Because nobody audits them.

The Broader Implication: Supply Chain Through the Side Door

The security community has invested heavily in securing dependencies with SBOMs, signed packages, dependency scanning, and reproducible builds. But developer tools and example code represent a parallel supply chain that largely goes unexamined.

Think about it:

CI scripts run with elevated privileges and access to deployment secrets.

Example code is copied and pasted into production systems.

Tool scripts are integrated into build pipelines where they process untrusted input.

An attacker doesn’t need to compromise a package registry or poison a dependency. They just need to exploit a tool that’s already trusted because it lives in the repo.

The Attack Surface Nobody’s Mapping

If you’re an attacker, this is basically the dream scenario:

Low barrier to entry. Just open a PR. No need to compromise credentials, social-engineer maintainers, or hijack packages.

High privilege execution. CI runners typically have access to secrets, signing keys, and deployment credentials.

Low detection probability. Security scanners focus on src/, not tools/ or examples/. Code review on PRs rarely scrutinizes filenames.

Plausible deniability. A weird filename could be dismissed as an accident or encoding issue.

Massive blast radius. One compromised CI pipeline can taint every artifact the project produces.

This is the software supply chain equivalent of walking in through the service entrance while everyone’s guarding the lobby.
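Since filenames rarely get a second look in review, one cheap defense-in-depth measure is to flag unusual ones automatically before any tooling touches them. The sketch below is an assumption on my part rather than something either project does: suspicious_paths and the allowlist are hypothetical, and the allowlist is deliberately conservative (it would also flag legitimate names containing spaces).

```python
import re

# Conservative allowlist: letters, digits, and a few punctuation characters
# common in source paths. Anything else is reported for a human to look at.
SAFE_PATH = re.compile(r"[A-Za-z0-9_./+@-]+")


def suspicious_paths(changed_files):
    """Return the changed paths containing characters outside the allowlist."""
    return [p for p in changed_files if not SAFE_PATH.fullmatch(p)]


if __name__ == "__main__":
    # Hypothetical changed-file list from a pull request.
    changed = ["source/common/http/codec.cc", "docs/a;id.md", "tools/$(id).py"]
    print(suspicious_paths(changed))
    # ['docs/a;id.md', 'tools/$(id).py']
```

A check like this does not replace fixing the sink. It only makes a crafted filename harder to slip past unnoticed.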

Quick Audit: Is Your Repo Vulnerable?

Run these one-liners on any repository to find potential CWE-78 hotspots in tool and example code:

Python:

JavaScript / TypeScript:

If either returns results where user-controlled data (filenames, paths, config values, environment variables) flows into those calls, you have a problem.
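The same kind of search can also be scripted, which makes it easier to run across many repositories. The following is a rough sketch under stated assumptions: the directory names (tools, examples, ci, scripts) and the regular expressions are my own heuristics, and a match is only a lead to review by hand, not a confirmed vulnerability.

```python
import re
from pathlib import Path

# Heuristic patterns only; a match is a lead to review, not a finding.
PYTHON_PATTERNS = [
    re.compile(r"\bos\.system\s*\("),
    re.compile(r"\bos\.popen\s*\("),
    re.compile(r"shell\s*=\s*True"),
]
JS_PATTERNS = [
    re.compile(r"\bexec(?:Sync)?\s*\("),
]
JS_SUFFIXES = {".js", ".mjs", ".cjs", ".ts"}


def scan_file(path: Path) -> list[tuple[int, str]]:
    """Return (line_number, line) pairs that match a risky-call pattern."""
    text = path.read_text(encoding="utf-8", errors="replace")
    if path.suffix == ".py":
        patterns = PYTHON_PATTERNS
    elif path.suffix in JS_SUFFIXES and "child_process" in text:
        # Only flag exec() in files that mention child_process, to skip
        # unrelated calls such as RegExp.prototype.exec.
        patterns = JS_PATTERNS
    else:
        return []
    hits = []
    for number, line in enumerate(text.splitlines(), start=1):
        if any(p.search(line) for p in patterns):
            hits.append((number, line.strip()))
    return hits


def scan_repo(root: Path, subdirs=("tools", "examples", "ci", "scripts")):
    """Yield (path, line_number, line) for each hit under the given subdirs."""
    for subdir in subdirs:
        base = root / subdir
        if not base.is_dir():
            continue
        for path in sorted(base.rglob("*")):
            if path.is_file():
                for number, line in scan_file(path):
                    yield path, number, line


if __name__ == "__main__":
    for path, number, line in scan_repo(Path(".")):
        print(f"{path}:{number}: {line}")
```

Run it from the repository root. The interesting hits are the ones where the argument is built from a filename, a config value, or an environment variable.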

Recommendations

For library maintainers:

Apply the same security review process to /tools, /examples, and CI scripts as you do to core code.

Never use shell=True (Python) or child_process.exec (Node.js) with interpolated strings. Use list-based arguments with subprocess.run() or child_process.execFile().

If shell execution is unavoidable, sanitize inputs with shlex.quote() (Python) or dedicated escaping functions.

Add CODEOWNERS rules for CI and tooling directories so changes require explicit security-aware review.
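On the Python side, the two safer options look roughly like this. It is a minimal sketch with hypothetical function names. To stay portable and harmless, the Python interpreter itself stands in for the real tool in the first function, and the second assumes a POSIX shell.

```python
import shlex
import subprocess
import sys


def show_argument_safe(file_path: str) -> str:
    """Pass the path as one argv element; no shell ever parses it."""
    # sys.executable with -c is a portable stand-in for a real tool.
    argv = [sys.executable, "-c", "import sys; print(sys.argv[1])", file_path]
    result = subprocess.run(argv, capture_output=True, text=True, check=True)
    return result.stdout


def show_argument_quoted(file_path: str) -> str:
    """Fallback when a shell is unavoidable: quote the value first (POSIX sh)."""
    command = f"printf '%s\\n' {shlex.quote(file_path)}"
    result = subprocess.run(
        command, shell=True, capture_output=True, text=True, check=True
    )
    return result.stdout


if __name__ == "__main__":
    hostile_name = "main.cc; echo INJECTED"
    print(show_argument_safe(hostile_name))    # the name comes back unchanged
    print(show_argument_quoted(hostile_name))  # same: the ';' stays literal
```

List-based arguments remove the shell from the picture, but they do not stop a filename that starts with - from being read as an option by the target program. Where the tool supports it, placing -- before file arguments is a common way to handle that.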

For developers consuming libraries:

Do not blindly copy tool/example code into your projects or pipelines.

Audit any script that runs in CI, especially if it processes filenames, paths, or user-influenced configuration.

Grep for os.system, shell=True, child_process.exec, and similar patterns in any code you import.

Treat anything in /tools or /examples as untrusted third-party code, because in terms of audit coverage, that’s exactly what it is.

For security teams:

Expand SAST rules to cover tool and example directories, not just src/.

Treat CI pipeline code as security-critical infrastructure.

Include developer tooling in your threat model.

When evaluating open-source projects, audit the full repo, not just the package that gets installed.

Key Takeaways

The most dangerous code is the code nobody reviews. Core libraries get audited. Tools and examples get a pass. Attackers know this.

Developer tools are a supply chain vector. When a CI script runs os.system() on pull request data, it’s as dangerous as any RCE in production. Maybe more so, because it has access to secrets and deployment credentials.

Trust is not transitive. Just because a project is well-maintained doesn’t mean every file in its repository has been security-reviewed. A CNCF-graduated project and a popular npm package both had the same textbook vulnerability hiding in their tooling.

The fix is almost always trivial. In both cases, replacing shell string interpolation with parameterized execution would have eliminated the vulnerability entirely. The cost of prevention is negligible compared to the cost of exploitation.

Your CI runner is a production server. It has secrets. It has network access. It builds and signs artifacts that ship to users. Treat it like the high-value target it is.

If you found this research useful, consider auditing the tool directories of the open-source projects you depend on. The next CWE-78 might be hiding in a script that’s been in the repo since day one, trusted by everyone, reviewed by no one.